Neural Field 3458408641 Apex Prism combines neural field theory with modular apex prism architectures to model spatially structured dynamics. It emphasizes time-sensitive processing through adaptive kernels and locality-preserving updates for streaming inputs. The system fuses vision, audio, and proprioception into a single latency-aware pipeline with calibrated fusion weights. Its transparent metrics and scalable coordination are intended to support robust autonomous performance across domains, although practical tradeoffs and deployment considerations have yet to be clarified.

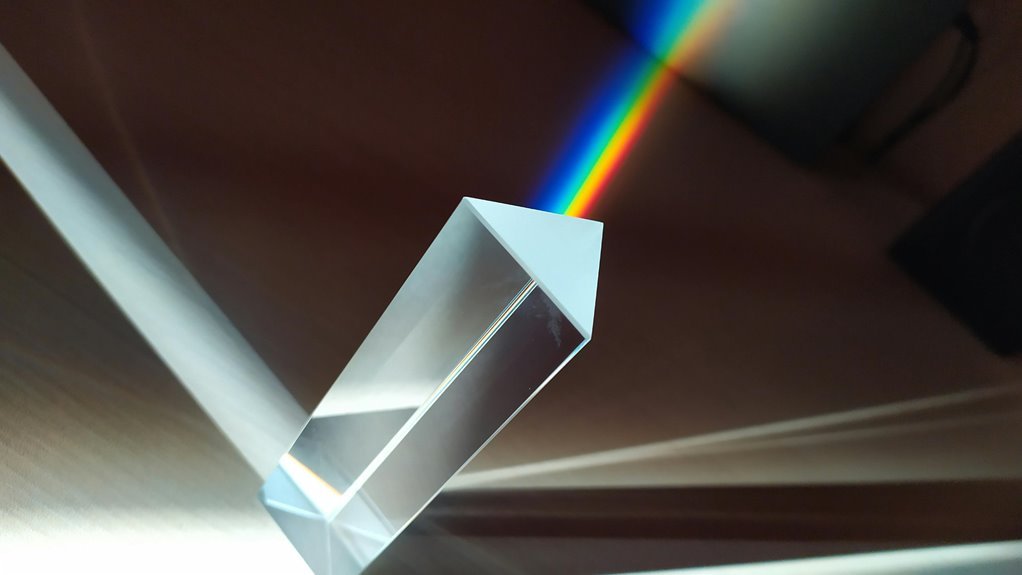

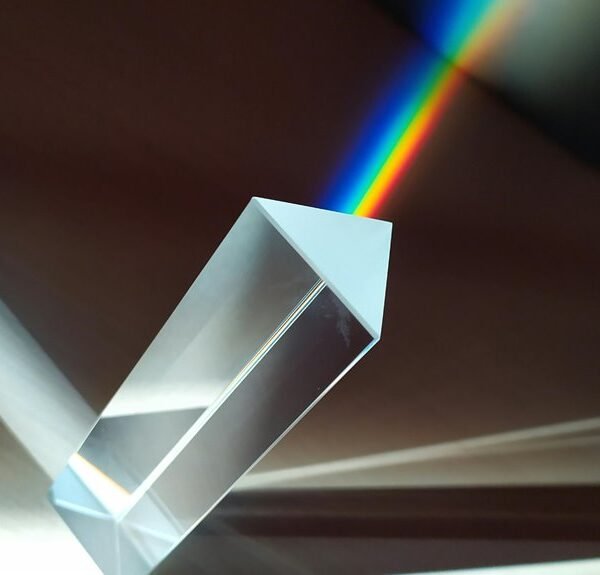

What Is Neural Field Apex Prism and Why It Matters

Neural Field Apex Prism is a modeling framework that integrates neural field theory with prism-like modular architectures to capture spatially structured neural dynamics and high-resolution representations. The neural field component describes how interactions across a continuous spatial domain give rise to structured activity, while the apex prism modules provide scalable, interpretable coordination between components. Analyzing temporal dynamics and multimodal fusion within this framework is intended to yield robust insights into neural computation.
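The text does not state which field equation the framework uses. As a point of reference, the sketch below simulates a classic Amari-type neural field, tau * du/dt = -u + sum_y w(x - y) f(u(y)) + I(x), discretized on a 1-D grid and integrated with forward Euler. The difference-of-Gaussians kernel, the sigmoid parameters, and all function names are illustrative assumptions, not part of Neural Field Apex Prism itself.

```python
import math


def mexican_hat(d, a_exc=0.5, s_exc=2.0, a_inh=0.25, s_inh=6.0):
    """Difference-of-Gaussians lateral kernel w(d): narrow excitation, broad inhibition."""
    return (a_exc * math.exp(-d * d / (2 * s_exc ** 2))
            - a_inh * math.exp(-d * d / (2 * s_inh ** 2)))


def sigmoid(u, beta=2.0, theta=0.5):
    """Firing-rate nonlinearity f(u) with gain beta and threshold theta."""
    return 1.0 / (1.0 + math.exp(-beta * (u - theta)))


def simulate_field(inputs, steps=200, tau=10.0, dt=1.0):
    """Forward-Euler integration of tau * du/dt = -u + sum_y w(x - y) f(u(y)) + I(x)."""
    n = len(inputs)
    u = [0.0] * n
    # Precompute w for every possible offset so each step is a discrete convolution.
    w = {d: mexican_hat(d) for d in range(-(n - 1), n)}
    for _ in range(steps):
        rates = [sigmoid(ui) for ui in u]
        lateral = [sum(w[x - y] * rates[y] for y in range(n)) for x in range(n)]
        u = [u[x] + (dt / tau) * (-u[x] + lateral[x] + inputs[x]) for x in range(n)]
    return u


# Usage: a localized input bump centered on grid point 22 of a 45-point field.
inputs = [1.0 if 20 <= i <= 24 else 0.0 for i in range(45)]
field = simulate_field(inputs, steps=400)
peak = max(range(len(field)), key=field.__getitem__)
```

With these parameters the lateral gain stays small enough for the field to settle into a single stable activity bump centered on the stimulus; the short-range excitation and longer-range inhibition are what make the response spatially structured.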

How the Prism Architecture Handles Time-Sensitive Dynamics

How does the Prism architecture manage time-sensitive dynamics in neural-field-inspired computations? The design encodes temporal context through adaptive kernels and locality-preserving updates, so activity patterns are recalibrated continuously as new data arrive. It supports real-time inference by processing inputs as a stream while keeping representations stable, which mitigates drift. The reported quantitative benchmarks indicate robust responsiveness to rapid changes, with bounded latency and a predictable memory footprint under diverse workloads.
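The paragraph names adaptive kernels, locality-preserving updates, and bounded memory without specifying them. The sketch below shows one way these pieces could fit together, assuming an exponential-smoothing temporal kernel whose rate grows with the frame-to-frame change, a spatial neighborhood average as the local update, and a fixed-length history buffer. `StreamingField` and its parameters are hypothetical.

```python
from collections import deque


class StreamingField:
    """Hypothetical streaming field: local updates, adaptive temporal rate, bounded history."""

    def __init__(self, size, radius=1, base_alpha=0.2, max_alpha=0.9, history=8):
        self.state = [0.0] * size
        self.radius = radius            # neighborhood half-width for local updates
        self.base_alpha = base_alpha    # smoothing rate when the input is steady
        self.max_alpha = max_alpha      # cap on the rate when the input changes abruptly
        self.history = deque(maxlen=history)  # only the last `history` frames are kept

    def _local_average(self, frame, i):
        # Each cell reads only its spatial neighborhood, which keeps updates local.
        lo = max(0, i - self.radius)
        hi = min(len(frame), i + self.radius + 1)
        return sum(frame[lo:hi]) / (hi - lo)

    def update(self, frame):
        if len(frame) != len(self.state):
            raise ValueError("frame size must match field size")
        # Adaptive kernel: a larger change since the previous frame gives faster tracking.
        change = 0.0
        if self.history:
            prev = self.history[-1]
            change = max(abs(a - b) for a, b in zip(frame, prev))
        alpha = min(self.max_alpha, self.base_alpha + change)
        smoothed = [self._local_average(frame, i) for i in range(len(frame))]
        self.state = [(1 - alpha) * s + alpha * x for s, x in zip(self.state, smoothed)]
        self.history.append(list(frame))
        return alpha


# Usage: a steady input, then a sudden jump that triggers a faster update.
field = StreamingField(size=5, history=3)
frames = ([0.0] * 5, [1.0] * 5, [1.0] * 5, [1.0] * 5, [1.0] * 5)
alphas = [field.update(frame) for frame in frames]
```

Each update costs O(size × radius) work and the buffer holds at most `history` frames, which is the sense in which latency and memory stay bounded in this sketch. The step after the jump uses the larger rate, and the rate falls back once the input stabilizes.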

Multi-Modal Data Fusion in Practice for Real-Time Inference

Multimodal data fusion for real-time inference integrates heterogeneous streams, such as vision, audio, and proprioceptive signals, into a unified, temporally coherent representation. The approach relies on modular pipelines, calibrated fusion weights, and latency-aware architectures. Clearly defined objectives guide the choice of evaluation metrics, while data governance ensures provenance, privacy, and reproducibility. The goal is robust real-time inference that balances autonomous operation with accountability and remains interpretable and methodologically rigorous.
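The text does not say how the fusion weights are calibrated. A common choice, assumed here, is inverse-variance weighting, where each modality receives weight 1/σ² derived from its calibrated noise variance; latency awareness is approximated by ignoring readings older than a staleness budget. `Reading`, `fuse`, and `max_age` are illustrative names, and the sketch fuses a single scalar rather than a full representation.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    modality: str     # e.g. "vision", "audio", "proprioception"
    value: float      # this modality's estimate of a shared scalar quantity
    variance: float   # calibrated noise variance; must be positive
    timestamp: float  # capture time in seconds


def fuse(readings, now, max_age):
    """Inverse-variance weighted fusion of readings no older than max_age seconds."""
    fresh = [r for r in readings if now - r.timestamp <= max_age]
    if not fresh:
        raise ValueError("no reading is fresh enough to fuse")
    if any(r.variance <= 0 for r in fresh):
        raise ValueError("variances must be positive")
    weights = [1.0 / r.variance for r in fresh]
    total = sum(weights)
    estimate = sum(w * r.value for w, r in zip(weights, fresh)) / total
    # Fused variance is the inverse of the summed precisions.
    return estimate, 1.0 / total, [r.modality for r in fresh]


# Usage: the proprioception reading is too old and is excluded from the fusion.
readings = [
    Reading("vision", 1.0, 1.0, 0.95),
    Reading("audio", 3.0, 1.0, 0.90),
    Reading("proprioception", 100.0, 1.0, 0.0),
]
estimate, variance, used = fuse(readings, now=1.0, max_age=0.2)
```

Inverse-variance weighting lets noisier modalities contribute less, and the fused variance is never larger than that of the best single input; dropping stale readings trades some accuracy for bounded latency.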

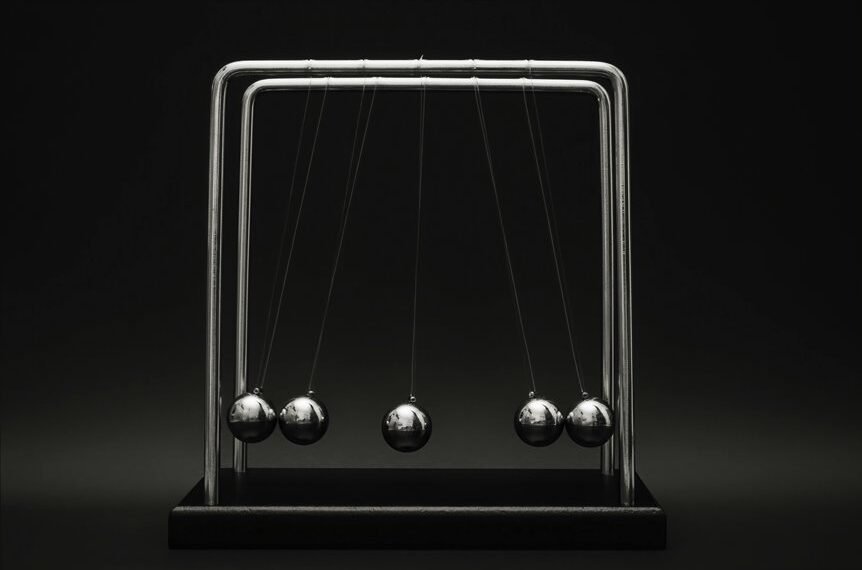

Applications and Impact: Autonomous Systems, AR/VR, and Science

What concrete gains emerge when systems fuse diverse sensing modalities to operate autonomously, render immersive experiences, and advance scientific discovery? Fusing multimodal cues within a neural field representation supports robust autonomous navigation and fault tolerance: if one sensor degrades, the remaining modalities can still constrain the estimate.
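The text does not describe its fault-handling mechanism. One simple way fusion can provide fault tolerance, assumed here for illustration, is to compare each modality against the cross-modal consensus and exclude strongly disagreeing readings before averaging. The sketch uses the median and the median absolute deviation (MAD) as that consensus; the function name, the threshold `k`, and the scalar readings are hypothetical.

```python
import statistics


def fault_tolerant_estimate(readings, k=3.0):
    """Average the modalities that agree; drop any reading more than k MADs from the median.

    readings maps a modality name to a scalar estimate of the same quantity.
    Returns (estimate, dropped_modalities).
    """
    if not readings:
        raise ValueError("need at least one reading")
    if k < 1.0:
        raise ValueError("k must be >= 1 so that at least one reading is kept")
    values = list(readings.values())
    med = statistics.median(values)
    # MAD is a spread measure that a single faulty sensor cannot inflate much.
    mad = statistics.median([abs(v - med) for v in values])
    limit = k * mad
    kept = {m: v for m, v in readings.items() if abs(v - med) <= limit}
    dropped = sorted(set(readings) - set(kept))
    return statistics.fmean(kept.values()), dropped


# Usage: proprioception reports a value far from the other two modalities.
estimate, dropped = fault_tolerant_estimate(
    {"vision": 2.0, "audio": 2.1, "proprioception": 9.0}
)
```

Because the median and MAD are robust statistics, one faulty modality cannot drag the consensus toward itself, so it is flagged rather than averaged in.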

The apex prism modules integrate perception over time, which can accelerate scientific experimentation and improve realism in AR/VR. The approach also offers transparent metrics, scalable architectures, and adaptable platforms for scientific research.

Conclusion

The Neural Field Apex Prism methodically integrates spatiotemporal neural representations with modular, latency-aware fusion. Empirical evidence suggests robust time-sensitive processing and drift resistance across multimodal streams, supporting reliable real-time inference in navigation and AR/VR. While the theory predicts resilient, scalable performance, independent validation is still needed to confirm that these results generalize. If confirmed, this architecture could reshape adaptive perception pipelines, enabling transparent metrics and coordinated autonomous operation in complex, dynamic environments.